Alice Weekly Meeting: Software for Hardware Accelerators / PDP-SRC

-

-

10:00

→

10:20

Discussion 20m Speakers: David Rohr (CERN), Giulio Eulisse (CERN)

Color code: (critical, news during the meeting: green, news from this week: blue, news from last week: purple, no news: black)

High priority Framework issues:

- Start / Stop / Start: 2 problems on O2 side left:


- All processes are crashing randomly (usually ~2 out of >10k) when restarting. Stack trace points to FMQ. https://its.cern.ch/jira/browse/O2-4639

- TPC ITS matching QC crashing accessing CCDB objects. Not clear if same problem as above, or a problem in the task itself:


- Stabilize calibration / fix EoS: New scheme: https://its.cern.ch/jira/browse/O2-4308:

- Test last week was wrong due to a misunderstanding between Giulio and me about which software to use :(.

- New version seems to work well, except one feature we could not test yet: to keep running the calibration after the data processing timeout.

- Some improvements for InfoLogger messages still to be done, Giulio will do them in follow-up PRs.

- Fix problem with ccdb-populator: no idea yet - since Ole left, someone else will have to take care.

- TF-status message (from https://github.com/AliceO2Group/AliceO2/pull/13495) sent by readout-proxy. Status?

Sync reconstruction

- Waiting for RC to test COSMIC replay data set.

- Waiting for RC to test STOP timeout impact.

- Problem that EPN2EOS is not working, could be due to CTF files now written with wrong permission?

- New SW version deployed at P2, running on Alma9, and has the new CRITICAL FairLogger severity.

Async reconstruction

- Remaining oscillation problem: GPUs sometimes get stalled for a long time, up to 2 minutes. Checking 2 things:

- does the situation get better without GPU monitoring? --> Inconclusive

- We can use increased GPU process priority as a mitigation, but it doesn't fully fix the issue.

- MI100 GPU stuck problem will only be addressed after AMD has fixed the operation with the latest official ROCm stack.

- Ready now to test on MI100 with new setup if problem persists, asked Catalin to start a test run.

- Limiting factor for pp workflow is now the TPC time series, which is too slow and creates backpressure (costs ~20% performance on EPNs). Enabled multi-threading as recommended by Matthias - need to check if it works.

AliECS related topics:

- Extra env var field still not multi-line by default; created https://its.cern.ch/jira/browse/OGUI-1624 to follow this up separately from other tickets.

- FLP working on this, but not yet deployed.

GPU ROCm / compiler topics:

- List of important issues with AMD:

- Random server reboots on MI100: Tried several workarounds, but no solution found so far. Giada spotted some weird FairMQ problems in the large scale test, which could probably be due to some memory corruption happening.

- Disappeared with Alma 9.5, AMD might still check with our Alma 9.4 / ROCm 6.2 server to understand root cause.

- Random crashes on MI100 due to a memory error; can be worked around by serializing all kernels and DMA transfers, at the cost of a 20% performance degradation.

- Still there with our latest setup. Only persisting problem. Deployed my serialization workaround automatically for online.

- AMD proposed a different workaround to disable the DMA engine. It works for working around this bug, but has another problem that GPUs get stuck. Sent a reproducer to AMD, although not important for us. We want a proper fix anyway.

- Debugged in detail what is going on: multiple commands enqueued in a command queue get executed in parallel or in the wrong order, so that data is read before it is written. Clearly a synchronization problem on AMD side.

- Miscompilation leading to crashes, worked around by changing our code, but compiler bug still there.

- Not appearing any more with ROCm 6.3.2, not clear if fixed, AMD might check the reproducer with the old ROCm.

- Provide an RPM ROCm version with all fixes, so that we don't need to compile clang manually with custom patches.

- No compiler patch or HIP patch necessary any more for ROCm 6.3.2, big step ahead. Still need a new version with a proper fix for the memory error.

- Proper way to enable amdgpu-function-calls instead of hacking AMD scripts and binaries.

- Can now do everything with CMake HIP language and Clang. Got rid of the hipcc binary, and thus of the hacks to enable function calls.

- hipHostRegister has become very slow when more than 1 GPU is visible (via ROCR_VISIBLE_DEVICES).

- Fixed with ROCm 6.3.2

- Discussed with AMD the meaning of a couple of workarounds we are applying. Once we are stable, we should remove some and see if they are still needed.


- Damon from AMD is back working for us, and will look at the GPU memory error next.

- SLC9 GPU container in Jenkins working and RPM publishing fixed. Set up as secondary container for online builds (since we still need the old container for the infra node) and switched to a unified SLC9 container for offline builds (can run on the GRID and on the EPNs with GPU).

- Updated vobox to use el9 container, reported a bug to Max about a missing library that was fixed. MI50s now working for offline in the new setup, MI100 to be tested.

- Try to find a better solution for the problem with __device__ inline functions leaking symbols in the host code.

- Added some functionality to CAMath class that was needed for BFloat16 for NN clusterizer.

- Can now bump to GCC 14, PR reopened. Suppressed one set of bogus compiler warnings due to GCC regressions. Now some other GCC 14 warnings in simulation code, pinged Sandro.

TPC / GPU Processing

- WIP: Use alignas() or find a better solution to fix alignment of Monte Carlo labels: https://its.cern.ch/jira/browse/O2-5314

- Waiting for TPC to fix bogus TPC transformations for good, then we can revert the workaround.

- Waiting for TPC to check PR which uses full cluster errors including average charge and occupancy map errors during seeding.

- Final solution: merging transformation maps on the fly into a single flat object: Still WIP

- Pending OpenCL2 issues:

- printf not working due to confirmed bug in clang, fix is being prepared. Prevents further debugging for now.

- GPU MemClean not working in TPC clusterization, need to debug.

- Crash in merger, which can be worked around by disabling clang SPIRV optimization. Probably bug in clang, but need to fix printf first to debug.

- Also with optimization disabled, crashing later in TPC merging, need printf to debug.


- Solved memset issue with OpenCL, but Clusterizer still gives slightly different clusters running on OpenCL.

- Felix reported the problem is due to off-by-one offset in NoiseSuppression. Need to check how that can happen only in OpenCL.

- Next high priority topic: Improvements for cluster sharing and cluster attachment at lower TPC pad rows.

Other Topics

- Hiring new fellow: open until 17.3., 7 applications so far, 4 of them declined by HR for formal reasons.

EPN major topics:

- Fast movement of nodes between async / online without EPN expert intervention.

- 2 goals I would like to set for the final solution:

- It should not be needed to stop the SLURM schedulers when moving nodes, there should be no limitation for ongoing runs at P2 and ongoing async jobs.

- We must not lose which nodes are marked as bad while moving.


- Interface to change SHM memory sizes when no run is ongoing. Otherwise we cannot tune the workflow for both Pb-Pb and pp: https://alice.its.cern.ch/jira/browse/EPN-250

- Lubos to provide interface to query current EPN SHM settings - ETA July 2023, Status?

- Improve DataDistribution file replay performance, currently cannot do faster than 0.8 Hz, cannot test MI100 EPN in Pb-Pb at nominal rate, and cannot test pp workflow for 100 EPNs in FST since DD injects TFs too slowly. https://alice.its.cern.ch/jira/browse/EPN-244 NO ETA

- DataDistribution distributes data round-robin in absence of backpressure, but it would be better to do it based on buffer utilization, and give more data to MI100 nodes. Now, we are driving the MI50 nodes at 100% capacity with backpressure, and then only backpressured TFs go on MI100 nodes. This increases the memory pressure on the MI50 nodes, which is anyway a critical point. https://alice.its.cern.ch/jira/browse/EPN-397

- TfBuilders should stop in ERROR when they lose connection.

- Allow epn user and grid user to set nice level of processes: https://its.cern.ch/jira/browse/EPN-349

- Online and Async updated to Alma9.5, Infrastructure nodes to follow.
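
The buffer-utilization-based distribution requested above for DataDistribution (EPN-397) could look roughly like the following. This is only a sketch of the idea, not how DataDistribution is implemented: the Node class, the node names, the buffer capacities and the TF sizes are all made up, and buffers never drain here, so the larger capacity simply stands in for a faster MI100 node.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """Hypothetical view of one EPN: name plus TF buffer capacity and usage."""
    name: str
    buffer_capacity: int
    buffer_used: int = 0

    @property
    def utilization(self) -> float:
        return self.buffer_used / self.buffer_capacity


def pick_node(nodes):
    """Choose the node with the lowest buffer utilization (first one wins ties)."""
    return min(nodes, key=lambda node: node.utilization)


def distribute(nodes, tf_sizes):
    """Assign each TF to the least utilized node instead of going round-robin."""
    assignment = []
    for size in tf_sizes:
        node = pick_node(nodes)
        node.buffer_used += size
        assignment.append(node.name)
    return assignment


# Made-up numbers: the MI100 node is given twice the buffer of the MI50 node.
nodes = [Node("epn-mi50", 100), Node("epn-mi100", 200)]
assignment = distribute(nodes, [10] * 6)
print(assignment)
```

With these made-up capacities the MI100 node ends up with more TFs than the MI50 node, which is the behaviour asked for, while plain round-robin would split them evenly.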

Other EPN topics:

- Check NUMA balancing after SHM allocation, sometimes nodes are unbalanced and slow: https://alice.its.cern.ch/jira/browse/EPN-245

- Fix problem with SetProperties string > 1024/1536 bytes: https://alice.its.cern.ch/jira/browse/EPN-134 and https://github.com/FairRootGroup/DDS/issues/440

- After software installation, check whether it succeeded on all online nodes (https://alice.its.cern.ch/jira/browse/EPN-155) and consolidate software deployment scripts in general.

- Improve InfoLogger messages when environment creation fails due to too few EPNs / calib nodes available, ideally report a proper error directly in the ECS GUI: https://alice.its.cern.ch/jira/browse/EPN-65

- Create user for epn2eos experts for debugging: https://alice.its.cern.ch/jira/browse/EPN-383

- EPNs sometimes get in a bad state, with CPU stuck, probably due to AMD driver. To be investigated and reported to AMD.

- Understand different time stamps: https://its.cern.ch/jira/browse/EPN-487


-

10:20

→

10:25

Following up JIRA tickets 5m Speaker: Ernst Hellbar (CERN)

-

10:25

→

10:30

TPC ML Clustering 5m Speaker: Christian Sonnabend (CERN, Heidelberg University (DE))

Developments

- Tested behaviour when setting / removing split flags for all clusters

- Ongoing: PR in O2 for GPU cluster finder

- Unknown status: PR in alidist for ONNX update (?)

Setting the split flags

(Setting the split flags for the NN exactly in the same way as the CF deconvolution kernel does)

- Also percentiles look better when regression model is used, even for 2D regression models (best one is still 3D CNN with (5,11,11) input)

- The network clearly improves all distributions, but let's make sure that this is not a fluke:

Removing the split flags (for both algorithms)

- The network is clearly better

What happens when many clusters are rejected?

- (Setting the flags again)

- Rejecting about 13.4% of clusters and about 4.5% of tracks

- Keeping CF deconvolution flags for direct comparability

- Most tracks rejected are with NCL < 40 and hence not relevant for physics (typical analysis cut: 60 NCl / track)

- Do we lose any physics-relevant tracks? No -> see plot below

- Still need to understand where we lose some tracks with high NCL (or better: shift them to lower NCL due to "false" cluster rejection)

Update

- Using patch from David to calculate Chi2 without cluster flags (note the different axis scaling. Chi2/NCL is now a lot bigger)

- Network is still better

-

10:30

→

10:35

ITS Tracking 5m Speaker: Matteo Concas (CERN)

-

10:35

→

10:45

TPC Track Model Decoding on GPU 10m Speaker: Gabriele Cimador (Universita e INFN Torino (TO))

General summary on GPU param optimisation

Can we optimize parameters individually, and which parameters do we have to optimize globally?

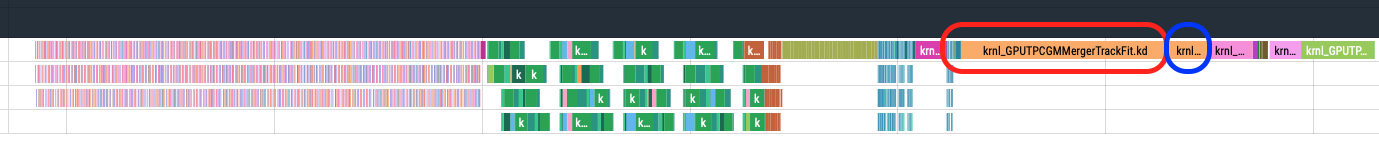

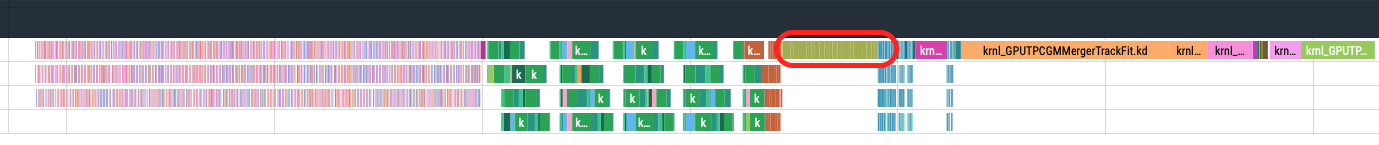

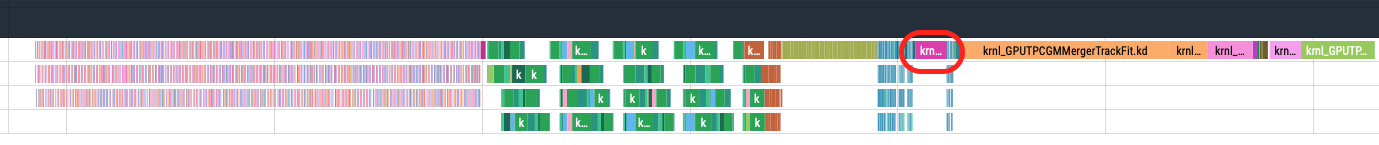

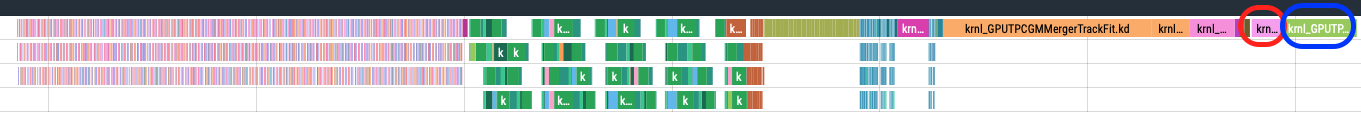

Image below is the GPU sync TPC processing chain. Each colored box is a GPU kernel, time flows in this direction -->.

Conclusions drawn:

- Compression and decompression steps: these steps contain kernels which do not execute concurrently. Parameters are independent and can be optimised separately.

- Clusterizer step: small concurrent kernels, dependent parameters, need global optimisation.

- TrackingSlices step: medium concurrent kernels, dependent parameters, need global optimisation.

- Merger step: mix of medium/long single stream kernels and small concurrent kernels. Some parameters can be optimised individually, while concurrent kernels require global optimisation.

Are the optimal parameters the same for different input data pp vs PbPb and low vs high IR?

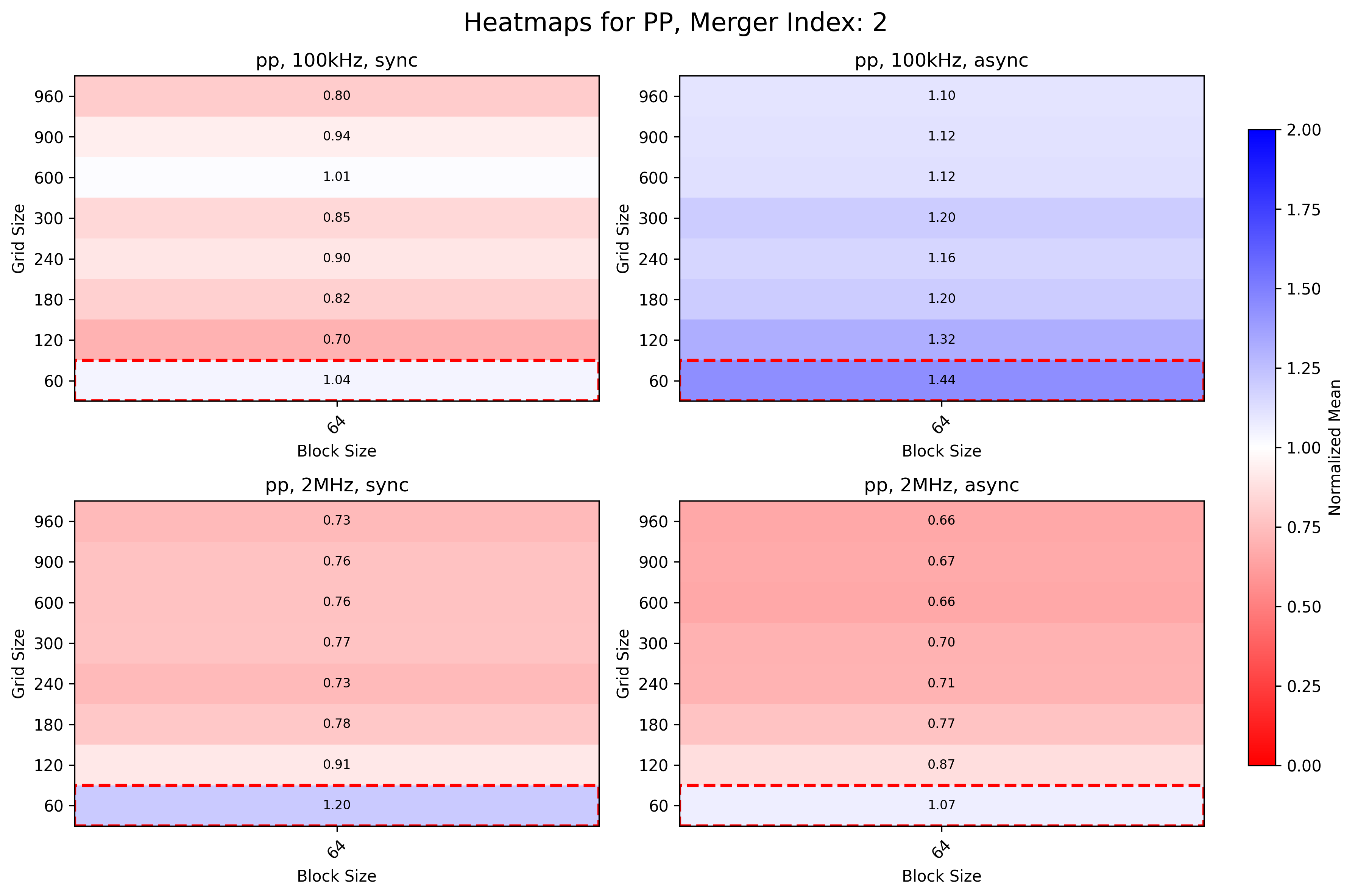

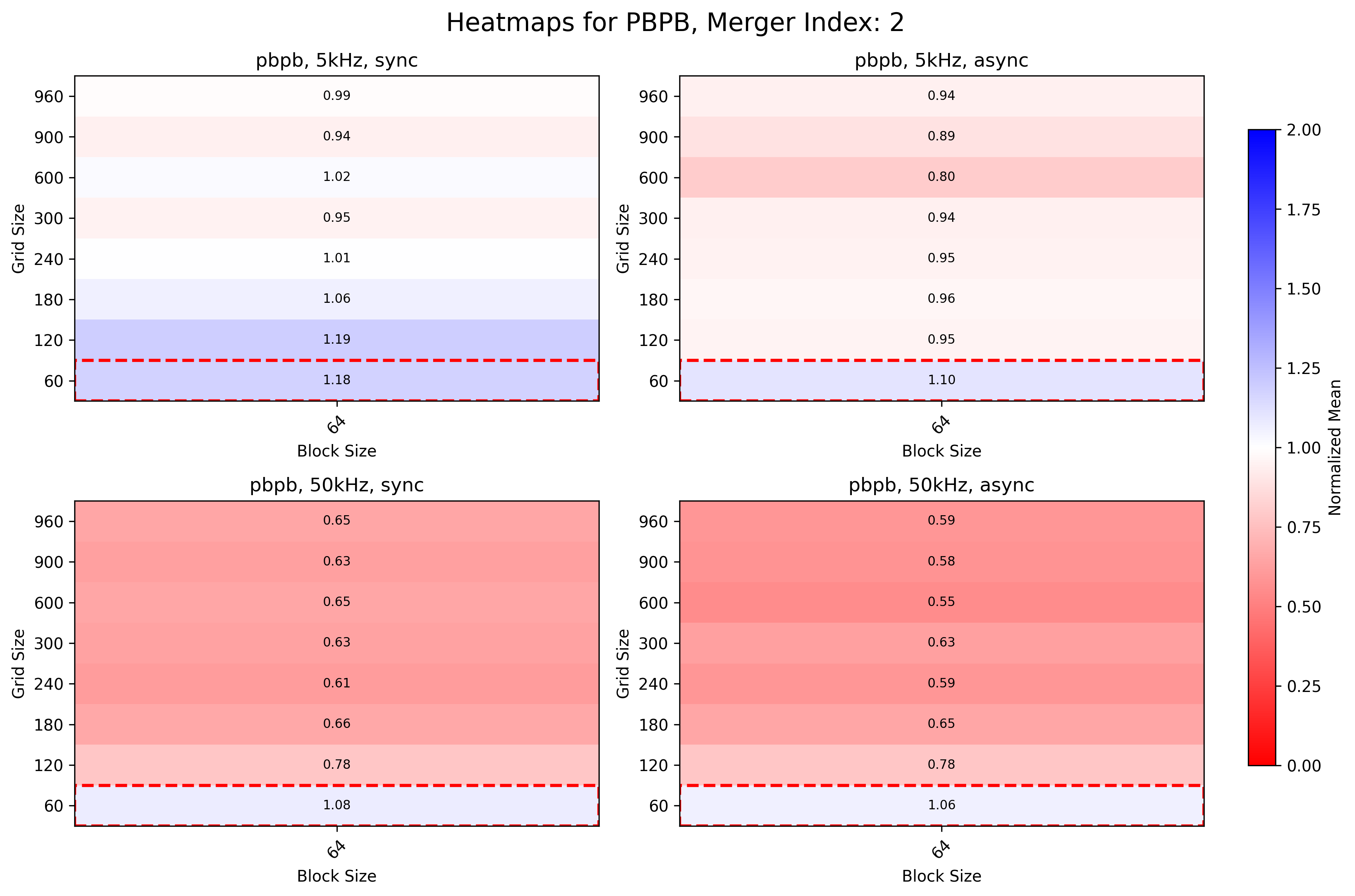

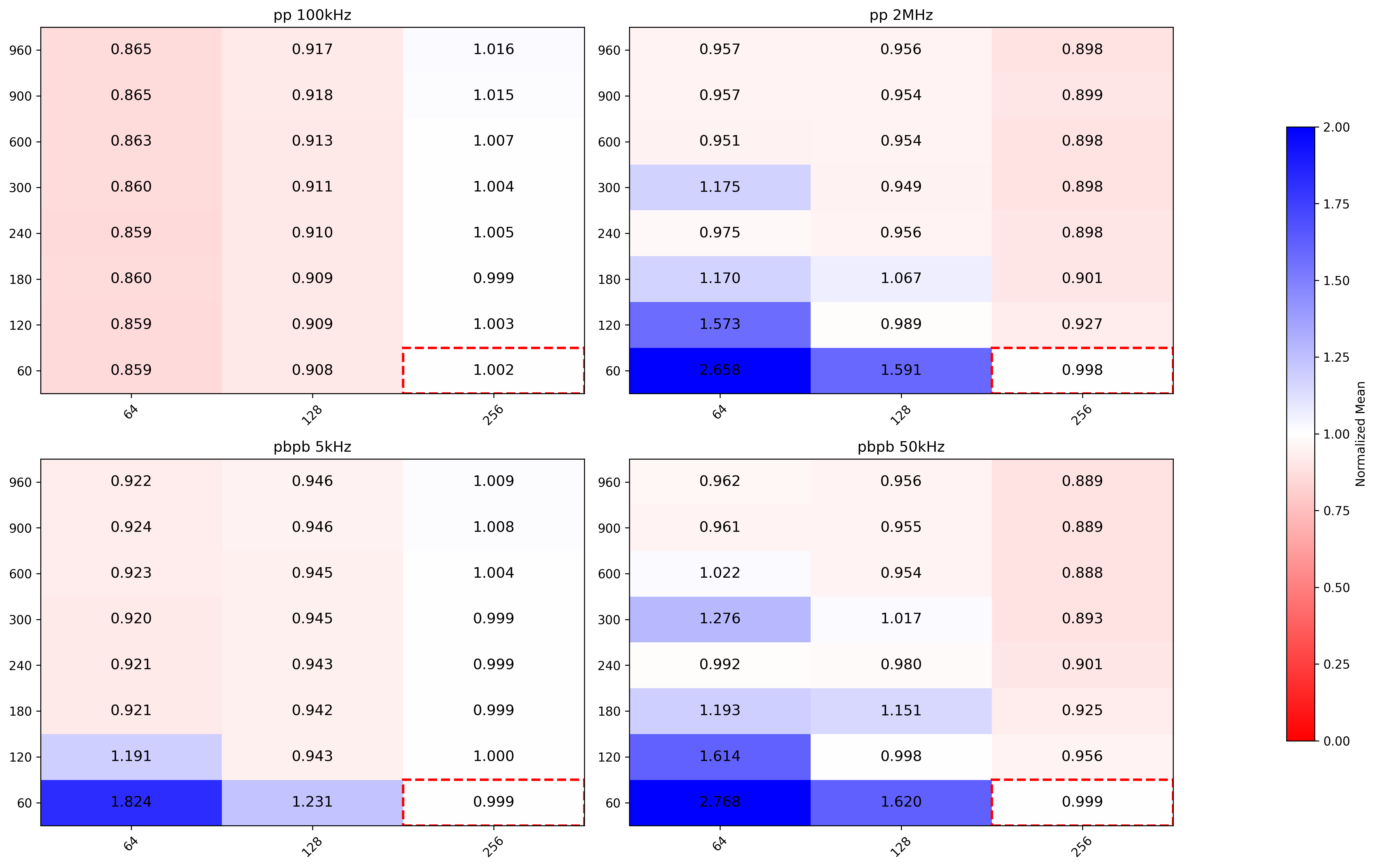

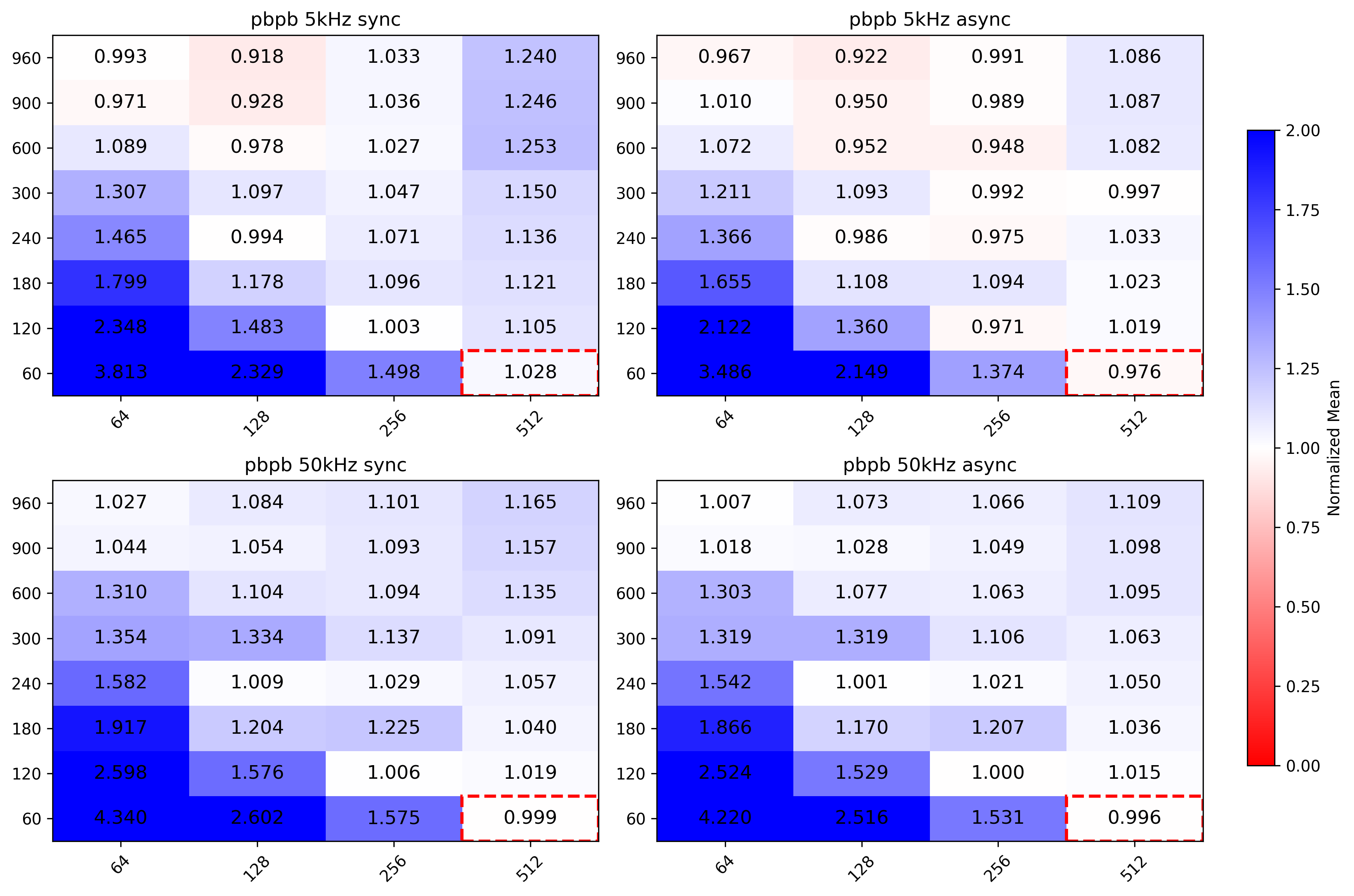

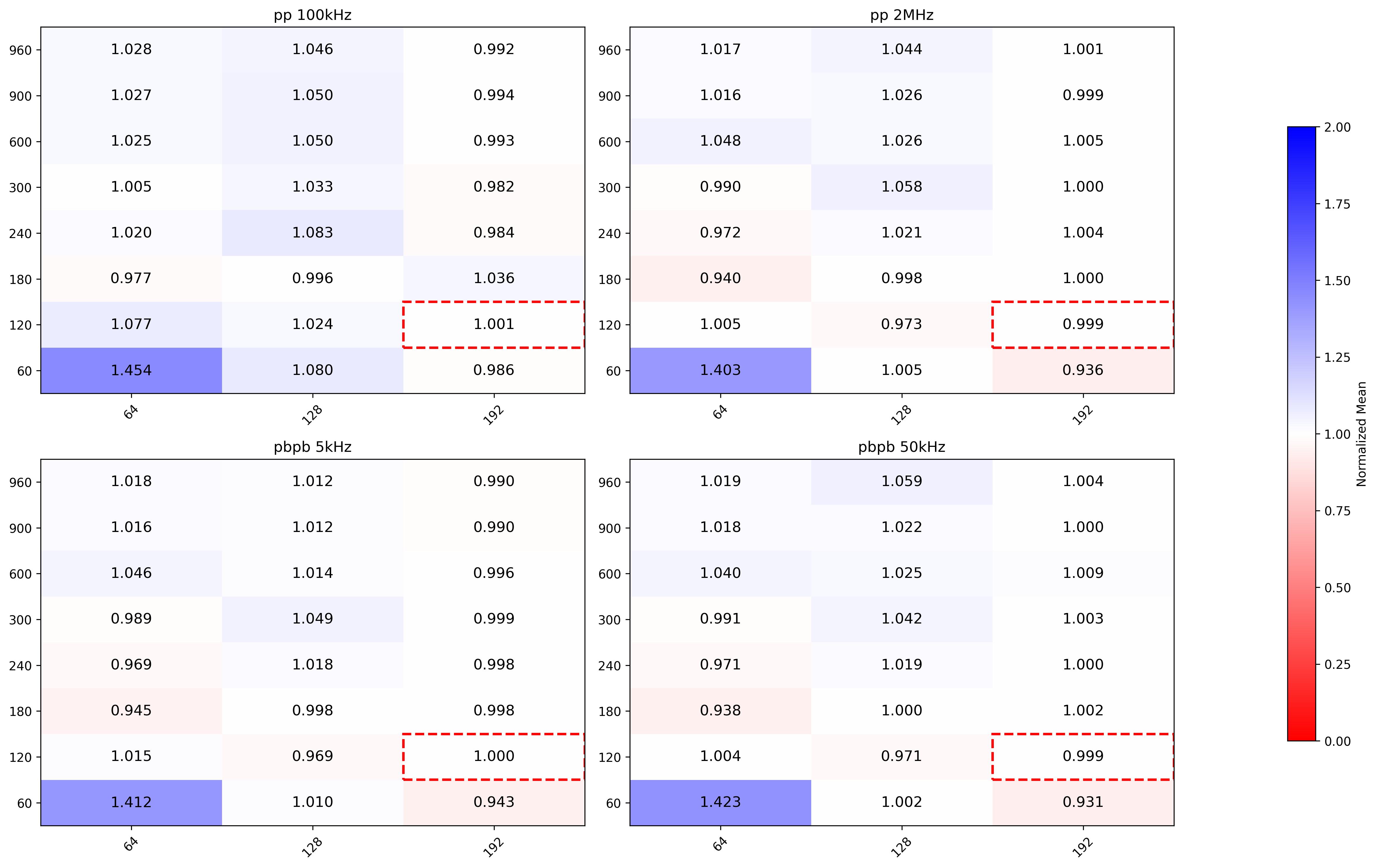

Measured on Alma 9.4, ROCm 6.3.1, MI50 GPU. Tested four different configurations: pp 100kHz, pp 2MHz, PbPb 5kHz and PbPb 50kHz. Simulated TFs with 128 LHC orbits.

Independent params optimisation

- Grid search approach. Block size is multiple of warp size (64 for AMD EPN GPUs), Grid size is multiple of number of Streaming Multiprocessors (Compute Units in AMD jargon).

- Each independent kernel has a custom search space, and can be studied separately from the others

- Created an automated measurement routine, capable of executing multiple grid searches on different independent kernels

- Executed grid search for the following kernels:

- MergerTrackFit

- MergerFollowLoopers

- MergerSliceRefit

- MergerCollect

- CompressionKernels_step0attached

- CompressionKernels_step1unattached
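
A minimal sketch of how such a grid-search space could be enumerated, with block sizes as multiples of the warp size and grid sizes as multiples of the number of Compute Units. The 60 Compute Units (MI50), the 1024-thread block limit, the multiplier range and the function name are assumptions for illustration, not the settings of the actual measurement routine.

```python
from itertools import product

WARP_SIZE = 64          # warp (wavefront) size of the AMD EPN GPUs, as in the notes
N_COMPUTE_UNITS = 60    # assumption: MI50 with 60 Compute Units
MAX_BLOCK_SIZE = 1024   # assumption: upper limit on threads per block


def launch_search_space(max_grid_multiplier=16):
    """Return all (block_size, grid_size) pairs to try for one kernel."""
    block_sizes = range(WARP_SIZE, MAX_BLOCK_SIZE + 1, WARP_SIZE)
    grid_sizes = [N_COMPUTE_UNITS * m for m in range(1, max_grid_multiplier + 1)]
    return list(product(block_sizes, grid_sizes))


space = launch_search_space()
print(len(space), "configurations, e.g.", space[:3])
```

Since each independent kernel has its own search space, the multiplier range would be chosen per kernel in practice.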

MergerTrackFit

Executed two times (Merger 1 and Merger 2)

pp

Merger 1

- Low IR same performance as normal configuration (grid size dependent on number of tracks)

- High IR same as low IR, except for (64,240) where it also has the same performance as normal

Merger 2

- Low and High IR sync benefit from bigger grid sizes

- High IR async is 34% faster with higher grid sizes than current configuration for async

PbPb

Merger 1

- Larger grid sizes almost reach current configuration (grid_size * block_size >= n_tracks)

Merger 2

- Low IR can be 10% faster with bigger grid sizes

- High IR is 40% faster with bigger grid sizes

MergerSliceRefit

Kernel is executed 36 times (once per TPC sector).

- pp low IR benefits from lower block sizes

- pp high IR benefits from larger grid and block sizes

- PbPb low IR better with lower block sizes

- PbPb high IR better with larger grid and block sizes

MergerCollect

pp

Overall best performance given by (64, 960), while current configuration is (512,60).

PbPb

Roughly same as pp

MergerFollowLoopers

Best configuration uses 900 or 960 as grid size. Current configuration is (256,200).

Compression kernels

Step 0 attached clusters

No significant improvements when changing grid and block sizes.

Step 1 unattached clusters

No significant improvements when changing grid and block sizes.

After grid search

Create a set of best parameters per beam type (pp, PbPb) and per IR (100kHz, 2MHz for pp and 5kHz, 50kHz for PbPb). How to choose the best configuration:

- compute conf_mean_time - default_conf_mean_time

- propagate the error (std dev) of the difference and compute the 95% confidence interval

- if 0 is in the interval, cannot tell with confidence whether the tested configuration is better than the default

- if one or more CIs have upper bound < 0, choose the one with the smallest mean (i.e. the best)
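
The selection rule above can be sketched as follows. The assumptions here are mine: a normal approximation (factor 1.96) for the 95% interval, independent measurements so the two std devs add in quadrature, and made-up timings; the function names are hypothetical and not part of the measurement scripts.

```python
import math

Z_95 = 1.96  # two-sided 95% quantile of the normal distribution (assumption)


def difference_ci(conf, default):
    """Return (diff, lower, upper) for conf_mean_time - default_conf_mean_time."""
    conf_mean, conf_std = conf
    default_mean, default_std = default
    diff = conf_mean - default_mean
    # Propagate the two std devs in quadrature (assumes independent measurements).
    err = math.sqrt(conf_std ** 2 + default_std ** 2)
    return diff, diff - Z_95 * err, diff + Z_95 * err


def choose_best(candidates, default):
    """Pick the significantly faster candidate with the smallest mean, else None."""
    significant = {
        params: stats
        for params, stats in candidates.items()
        if difference_ci(stats, default)[2] < 0  # whole CI lies below zero
    }
    if not significant:
        return None
    return min(significant, key=lambda params: significant[params][0])


# Made-up (mean, std dev) timings in ms, keyed by (block size, grid size).
default = (10.0, 0.2)
candidates = {
    (64, 960): (8.0, 0.3),
    (128, 480): (9.9, 0.3),
    (256, 240): (9.0, 0.2),
}
best = choose_best(candidates, default)
print(best)
```

A candidate whose interval contains 0 is never selected, so the default stays in place whenever no tested configuration is significantly faster.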

Plug in the best parameters for each beamtype / IR configuration and check if there is a noticeable improvement in the whole sync / async chain (work in progress).

Dependent params optimisation

- More difficult to tackle. Group kernels which run in parallel and optimise this set.

- Identified the following kernels as the longest ones that execute concurrently with other kernels:

- CreateSliceData

- GlobalTracking

- TrackletSelector

- NeighboursFinder

- NeighboursCleaner

- TrackletConstructor_singleSlice

- Started with grid search approach on TrackletConstructor_singleSlice. Measured both the kernel mean execution time and the whole SliceTracking execution time, as changing parameters may influence the execution time of other kernels and thus of the whole SliceTracking step.

- Block size is multiple of warp size (64 for AMD EPN GPUs), Grid size is multiple of number of Streaming Multiprocessors (Compute Units in AMD jargon).

Possible ideas for post manual optimization

- Isolate the parameters which are dependent, i.e. kernels from the same task which run in parallel (e.g. Clusterizer step, SliceTracking slice)

- Apply known optimization techniques to such kernel groups

- Grid/random search

- Bayesian optimization?

See: F.-J. Willemsen, R. Van Nieuwpoort, and B. Van Werkhoven, “Bayesian Optimization for auto-tuning GPU kernels”, International Workshop on Performance Modeling, Benchmarking and Simulation of High Performance Computer Systems (PMBS) at Supercomputing (SC21), 2021. Available: https://arxiv.org/abs/2111.14991
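
As an illustration of the random-search option for a group of dependent kernels: sample the launch parameters of all kernels in the group jointly and keep the configuration with the lowest time for the whole step. The choice of kernel group, the value ranges, the 60 Compute Units and in particular fake_step_time (a synthetic stand-in for a real GPU measurement) are assumptions made only for this sketch.

```python
import random

WARP_SIZE = 64         # warp (wavefront) size of the AMD EPN GPUs
N_COMPUTE_UNITS = 60   # assumption: MI50 with 60 Compute Units
# Example group of concurrently running kernels, names taken from the list above.
KERNELS = ["NeighboursFinder", "NeighboursCleaner", "TrackletConstructor_singleSlice"]


def sample_config(rng):
    """Draw one joint configuration: a (block_size, grid_size) pair per kernel."""
    return {
        kernel: (WARP_SIZE * rng.randint(1, 16), N_COMPUTE_UNITS * rng.randint(1, 16))
        for kernel in KERNELS
    }


def random_search(measure_step_time, n_trials=50, seed=0):
    """Keep the sampled configuration with the lowest time for the whole step."""
    rng = random.Random(seed)
    best_cfg, best_time = None, float("inf")
    for _ in range(n_trials):
        cfg = sample_config(rng)
        step_time = measure_step_time(cfg)
        if step_time < best_time:
            best_cfg, best_time = cfg, step_time
    return best_cfg, best_time


def fake_step_time(cfg):
    """Synthetic cost, NOT a measurement: penalizes distance from (256, 480)."""
    return sum(abs(b - 256) / 64 + abs(g - 480) / 60 for b, g in cfg.values())


best_cfg, best_time = random_search(fake_step_time, n_trials=20, seed=1)
print(best_cfg, best_time)
```

In a real study measure_step_time would run the benchmark and return the time of the whole step rather than of a single kernel, since the kernels in the group influence each other.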

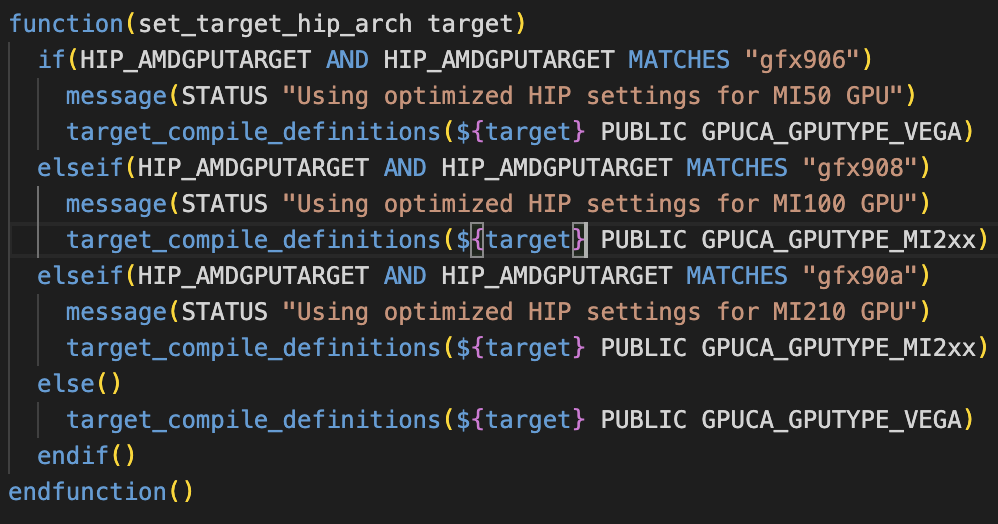

Possible bug spotted

HIP_AMDGPUTARGET set to "default" in GPU/GPUTracking/Standalone/cmake/config.cmake translates into HIP_AMDGPUTARGET=gfx906;gfx908 and forces the use of MI50 params.

Basically, HIP_AMDGPUTARGET=gfx906;gfx908 enters the first if clause for MI50 even if I am compiling for MI100. Commented out set(HIP_AMDGPUTARGET "default") in the config.cmake of the standalone benchmark and forced usage of MI100 parameters via

cmake -DCMAKE_INSTALL_PREFIX=../ -DHIP_AMDGPUTARGET="gfx908" ~/alice/O2/GPU/GPUTracking/Standalone/

Did not investigate further on this.

-

10:45

→

10:55

Efficient Data Structures 10m Speaker: Dr Oliver Gregor Rietmann (CERN)
