US ATLAS Computing Facility

Facilities Team Google Drive Folder

Zoom information

Meeting ID: 996 1094 4232

Meeting password: 125

Invite link: https://uchicago.zoom.us/j/99610944232?pwd=ZG1BMG1FcUtvR2c2UnRRU3l3bkRhQT09

-

13:00

→

13:10

WBS 2.3 Facility Management News 10m

Speakers: Robert William Gardner Jr (University of Chicago (US)), Dr Shawn Mc Kee (University of Michigan (US))

-

13:10

→

13:20

OSG-LHC 10m

Speakers: Brian Hua Lin (University of Wisconsin), Matyas Selmeci

- gratia-probe-1.23.2 has a bug affecting non-HTCondor sites that prevents pilot accounting records from being uploaded. Any site with this version installed should update immediately to gratia-probe-1.23.3 and grep for mapped VO Unix names in /var/lib/gratia/data/quarantine/. If any records are found, please contact help@opensciencegrid.org.

- HTCondor-CE 5.1.1, HTCondor 9.0.1, and XRootD 5.2.0 are available in osg-upcoming-testing
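
The quarantine check described in the gratia-probe item above could be sketched roughly as follows. This is only an illustration, not an OSG-provided tool: the function name `find_quarantined_records` is hypothetical, the quarantined files are assumed to be readable as text, and the match is a plain substring search (comparable to `grep -rl`). The VO Unix names to search for are site-specific and must be supplied by the site.

```python
from pathlib import Path


def find_quarantined_records(quarantine_dir, vo_unix_names):
    """Map each file under quarantine_dir to the VO Unix names it mentions.

    Hypothetical helper: assumes quarantined records can be read as text
    and that a simple substring match is good enough for a first look.
    """
    hits = {}
    root = Path(quarantine_dir)
    if not root.is_dir():
        # Nothing to scan (directory missing or not a directory).
        return hits
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        try:
            # Replace undecodable bytes instead of failing on binary data.
            text = path.read_text(errors="replace")
        except OSError:
            # Skip files that cannot be read (e.g. permissions).
            continue
        matched = sorted(name for name in vo_unix_names if name in text)
        if matched:
            hits[str(path)] = matched
    return hits
```

For example, calling `find_quarantined_records("/var/lib/gratia/data/quarantine/", ["usatlas1"])` would list files mentioning the account `usatlas1`, where `usatlas1` is a placeholder for whatever Unix names the site maps its VOs to. A non-empty result is the case where the item above asks sites to contact help@opensciencegrid.org.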

-

13:20

→

13:35

Topical Reports

Convener: Robert William Gardner Jr (University of Chicago (US))

- 13:35 → 13:40

-

13:40

→

14:00

WBS 2.3.2 Tier2 Centers

Updates on US Tier-2 centers

Convener: Fred Luehring (Indiana University (US))

13:40

AGLT2 5m

Speakers: Philippe Laurens (Michigan State University (US)), Dr Shawn Mc Kee (University of Michigan (US)), Prof. Wenjing Wu (University of Michigan)

Getting ready for downtime and infrastructure update starting 8am Monday 14-Jun-2021

- Updating the topology in OSG/git to prepare for the downtime (thanks to Brian, Ofer and Mark)

- UM will finish replacing all the main switches, all cabling (fiber where possible), and the configuration.

Some servers and worker nodes will need to be relocated.

- MSU will be moving all services and dCache storage to the MSU Data Center Monday-Tuesday (Wave 1) to coincide with the UM downtime.

Our public and private networks are now extended from our old EX9208 to our 2 new QFX5120 at the DC.

2 nodes (Wave 0) were moved this Monday to iron out networking issues.

Some multicast (for ganglia) and stability issues were discovered and fixed.

The T2 WNs (and the MSU T3) will be moved over time (Wave 2, etc).

Moving the last set of worker nodes will need to be synchronized with the move of the department servers sharing the same cooling (otherwise CRACs would fail on too-cold air return).

- The UM-MSU link will unfortunately not be switched over to the new State Research Corridor Triangle at this time.

The MSU multi-100G Research Network will also not be ready for cut-over until at least July.

- Optimistically we may have dCache back on Wednesday.

-

13:45

MWT2 5m

Speakers: David Jordan (University of Chicago (US)), Jessica Lynn Haney (Univ. Illinois at Urbana Champaign (US)), Judith Lorraine Stephen (University of Chicago (US))

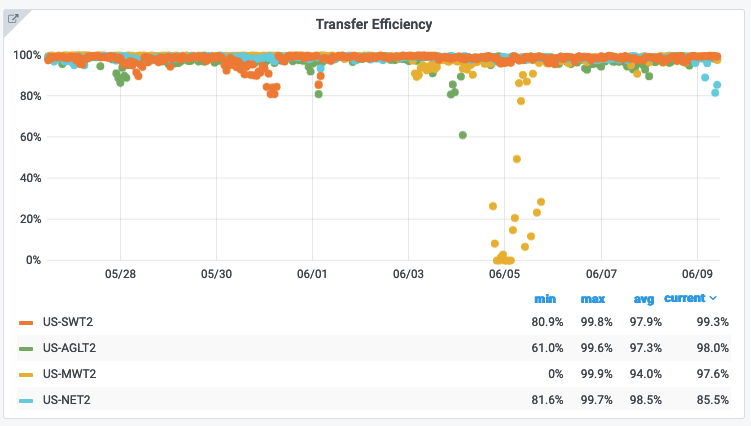

UC - loss of IPv6 connectivity to PanDA took the site offline from last Thursday until Sunday. On Monday, IPv6 connectivity from IU/UIUC to UC was lost.

IU - new head node and perfSONAR servers are racked and ready to be brought online. Squid service was degraded because of an expired k8s certificate on iut2-slate.

UIUC - working towards adding ICC's HTC resources.

-

13:50

NET2 5m

Speaker: Prof. Saul Youssef (Boston University (US))

Dealing with an issue right now....

GPFS getting overloaded => Hammercloud bounced us a couple of times yesterday. Currently ~30% ddm failures. Having to reboot one of our gridftp endpoints. Possibly DAOD physics validation jobs?

xrd 5.2.0 with clustering and custom containers is working for the GPFS storage.

Preparing to buy worker nodes.

-

13:55

SWT2 5m

Speakers: Dr Horst Severini (University of Oklahoma (US)), Mark Sosebee (University of Texas at Arlington (US)), Patrick Mcguigan (University of Texas at Arlington (US))

-

14:00

→

14:05

WBS 2.3.3 HPC Operations 5m

Speaker: Lincoln Bryant (University of Chicago (US))

Pilot is failing to create log files at NERSC for a small percentage of jobs. This appears to block stage-out for the entire job; I am investigating. Have been in communication with Tadashi regarding the stager. It is still not understood why the pilot is failing to create a log file. I will also email Paul if issues remain after updating the pilot to the latest version. Have reduced Harvester to a single queued job until I can reliably get jobs to complete again.

TACC is down for maintenance, which is taking much longer than expected.

-

14:05

→

14:20

WBS 2.3.4 Analysis Facilities

Convener: Wei Yang (SLAC National Accelerator Laboratory (US))

-

14:05

Analysis Facilities - BNL 5m

Speaker: William Strecker-Kellogg (Brookhaven National Lab)

-

14:10

Analysis Facilities - SLAC 5m

Speaker: Wei Yang (SLAC National Accelerator Laboratory (US))

- 14:15

-

14:20

→

14:40

WBS 2.3.5 Continuous Operations

Convener: Ofer Rind (Brookhaven National Laboratory)

- WebDAV and XRootD service added to OSG topology; WebDAV and XRootD service type mappings now added to CRIC as well.

- A number of questions about recent AGLT2 downtime settings and site configuration have been resolved

- MWT2 issue encountered trying to shorten downtime

- Testing with BNL dCache downtime today as well

- Mark is auditing site configurations

- Also looking at SRR settings

- XRootD 5.2 HTTP-TPC testbed updated at BNL and SWT2

- Status of deployment plan at OU?

- F-S DevOps meeting (minutes)

- Recent MWT2 squid downtime/cert issue

- Moving to normalize SLATE squid configuration going forward

- Discussion of support structure/processes

- Squid failover configurations (in general) - future topical presentation?

-

14:20

US Cloud Operations Summary: Site Issues, Tickets & ADC Ops News 5m

Speakers: Mark Sosebee (University of Texas at Arlington (US)), Xin Zhao (Brookhaven National Laboratory (US))

-

14:25

Service Development & Deployment 5m

Speakers: Ilija Vukotic (University of Chicago (US)), Robert William Gardner Jr (University of Chicago (US))

XCaches

- all updated to 5.2.0, moved to gStream monitoring

- since Squid wants one dedicated disk, I will be removing one disk from all xcaches and restarting everything

- SLATE deployment in Beijing should be ready by the end of the month.

Squids

- created everything needed for alarm/alert generation, should be in chart before end of the week

Alarms & Alerts

- working fine, updates on Frontier alarm generating code.

Rucio devs:

- fixes to how Rucio gets client IP, how it calculates replica ordering

- work on setting up part of that infrastructure in Cloudflare.

-

14:40

→

14:45

AOB 5m
