Alice Weekly Meeting: Software for Hardware Accelerators

-

-

10:00

→

10:20

Discussion 20mSpeaker: David Rohr (CERN)

Color code: (critical, news during the meeting: green, news from this week: blue, news from last week: purple, no news: black)

Sync reconstruction

Async reconstruction

- Need to investigate short GPU stall problem.

- Limiting factor for pp workflow is now the TPC time series, which is to slow and creates backpressure (costs ~20% performance on EPNs). Enabled multi-threading as recommended by Matthias - need to check if it works.

- Async reco test (using some 2024 Pb-Pb setup I copied from the GRID) were successful.

- Improved O2DPG scripts and added ALIEN_JDL_SITEARCH setting to select between MI50, MI100, NERSC (so we do not need custom run scripts per site). Test jobs with new O2DPG are failing, need to investigate why.

- For tests at NERSC, GRID people need to set up a queue such that we can submit GRID jobs that request 64 cores and GPU access.

GPU ROCm / compiler topics:

- Issues that disappeared but not yet understood: random server reboot with alma 9.4, miscompilation with ROCm 6.2, GPU getting stuck when DMA engine turned off, MI100 stalling with ROCm 5.5.

- Problem with building ONNXRuntime with MigraphX support.

- Need to find a way to build ONNXRuntime with support for CUDA and for ROCm.

- Try to find a better solution for the problem with __device__ inline functions leaking symbols in the host code.

- LLVM Bump to 20.1: status?

- ROCm 6.4.1 status:

- AMD is checking the reproducer. I have some idea how to narrow down where it miscompiles using different compile flags in per-kernel mode.

- New problem with ROCm 6.5 / 7.0 after bumping clang: New clang encounters internal compiler error processing our code...

- ROCm 7.0 introduces some non-backward-compatible changes. Might need code changes in O2, and synchronized bump of ROCm on EPNs, in build and CI container, and in O2/dev.

TPC / GPU Processing

- WIP: Use alignas() or find a better solution to fix alignment of monte carlo labels: https://its.cern.ch/jira/browse/O2-5314

- Waiting for TPC to fix bogus TPC transformations for good, then we can revert the workaround.

- Waiting for TPC to check PR which uses full cluster errors including average charge and occupancy map errors during seeding.

- Final solution: merging transformation maps on the fly into a single flat object: Draft version by Sergey exists but still WIP.

- Matthias Kleiner might look into commissioning this.

- Pending OpenCL2 issues:

- printf not working due to confirmed bug in clang, fix is being prepared. Prevents further debugging for now.

- Crash in merger, which can be worked around by disabling clang SPIRV optimization. Probably bug in clang, but need to fix printf first to debug.

- Also with optimization disabled, crashing later in TPC merging, need printf to debug.

- printf not working due to confirmed bug in clang, fix is being prepared. Prevents further debugging for now.

- Need to check the problem with ONNX external memory allocator.

- Next high priority topic: Improvements for cluster sharing and cluster attachment at lower TPC pad rows. WIP PR: https://github.com/AliceO2Group/AliceO2/pull/14542

EPN GPU Topics:

-

10:20

→

10:25

TPC ML Clustering 5mSpeaker: Christian Sonnabend (CERN, Heidelberg University (DE))

Framework

- ONNX: Non-deterministic compute on GPU

- More global issue. Tried all variations, but even in float32 using

(mPImplOrt->sessionOptions).AddConfigEntry("session_options.use_deterministic_compute", "1");

the compute was not deterministic. - Investigation in GitHub threads and forums shows that this is indeed not guaranteed by ONNX (or any other framework), not even during training!

- SetDeterministicCompute: "... If set to true, this will enable deterministic compute for GPU kernels where possible. ..."

- More global issue. Tried all variations, but even in float32 using

- Variations (using only the classification network with fixed threshold):

- L2, N16: 276782342 \pm 105 ~ 3.8x10e-5% deviation

- L5, N128: 265601210 \pm 111 ~ 4.2x10e-5% deviation

GPU timing test

(see debugLevel=0 folder) Classification network + CF regression-> Larger batch size is better, but also: Almost no difference in wall time from L2_N16 to L5_N32 for almost any batchsize -> L5_N32 will be the network of choice(see debugLevel=1 folder) Classification network + CF regression-> Larger batch size is better. Increase from L2_N16 to L5_N32 is factor 1.4 but typically largest jumps noticeable from 32 to 64 neurons per layer (potentially connected to cross-warp computations?)Memory consumption increases with larger batchsize: Input = BS x sizeof(float16) x (e.g. 3x9x9 + 3) x #streams + ONNX. For batchsize of 262144, 3 streams: Input ~387MB + ONNXPhysics- Did the QA on the ideal clusters again now compared to ~1.5 years ago

- Changes in between:

- Added looper tagging

- Changed peak finder to the peak finder of the GPU cluster finder (for training data generation)

- Using native clusters from reconstruction, not the clusters generate in the QA macro

- Using simulation which enforces 0-5% centrality, so efficiencies go down a bit, fake-rates go up a bit

- Network size = classification network size, Regression algorithm = GPU CF regression

Raw efficiency without loopers: How many clusters have been attached to at least one ideal (MC) cluster, removing all (local) regions in loopers

- NN can never attach more clusters than GPU CF because it won't produce more clusters (it can only reject)

Another possibility: #(attached ideal clusters, not in looper tagged region) / #reconstructed clusters

- Can be artificially inflated with number of loopers, but in all cases network should reject looping clusters better, so efficiency should go up

To-Do

- Create standalone reproducer for ONNX non-determinism

- Create Efficiency-Fake plots with regression network

- ONNX: Non-deterministic compute on GPU

-

10:25

→

10:30

GPU Parameter Optimizations 5mSpeaker: Gabriele Cimador (Universita e INFN Torino (TO))

News from GPU parameter tuning

- Used

GPUCA_KERNEL_RESOURCE_USAGE_VERBOSE 1 - For 750kHz pp dataset, tuner selected as best configuration

block_size = 512, grid_size = 540forGMMergerCollect - This translated to

512, 9on the parameter header --> try to fit 9 blocks of 512 threads each on each CU - What

GPUCA_KERNEL_RESOURCE_USAGE_VERBOSEsaid: Occupancy [waves/SIMD]: 9 - On MI50: 4 SIMD per CU

- Number of threads residing on the GPU at the same time: 64 threads per wave * 9 waves per SIMD * 4 SIMD per CU * 60 CUs in a MI50 = 64 * 9 * 4 * 60 = 138 240

- Number of requested threads: 512 threads per block * 9 blocks per CU * 60 CUs in a MI50 = 276 480

- Actual threads / Requested threads = 138 240 / 276 480 = 0.5

- So half grid can reside on the GPU

HS23 Contribution from ALICE

Chatted with Robin yesterday. They are prone to use the CI container directly.

CUDA compatibility issue

From Nvidia docs:

-

CUDA Compatibility guarantees allow for upgrading only certain components.

-

Backwards compatibility ensures that a newer NVIDIA driver can be used with an older CUDA Toolkit.

-

Minor version and forward compatibility ensure that an older NVIDIA driver can be used with a newer CUDA Toolkit (until certain version).

-

-

FAQ: Does CUDA compatibility work with containers? Yes, when using containers that are based on the official CUDA base images.

What's next

- Write script to run the benchmark within the CI container

- Test this POC on one of their GPUs:

GPU model Driver version Tesla T4 575.57.08 (CUDA Version: 12.9) A100 575.57.08 (CUDA Version: 12.9) V100S 560.35.05 (CUDA Version: 12.6)

Question

Differences between sync and async run of the benchmark, except from RTC and compression/decompression?

- Used

-

10:30

→

10:35

Efficient Data Structures 5mSpeaker: Dr Oliver Gregor Rietmann (CERN)

Summer Student Milla Bramsted

- She is working on benchmarking SoA code on GPUs

- We will track her project in this google doc

- She is adding CUDA kernels to this repo

- Three CUDA kernels are now running in our benchmark framework

- Next steps:

- Run the same kernels for different data layouts

- Add the other NGT SoA approaches again (were temporarily removed)

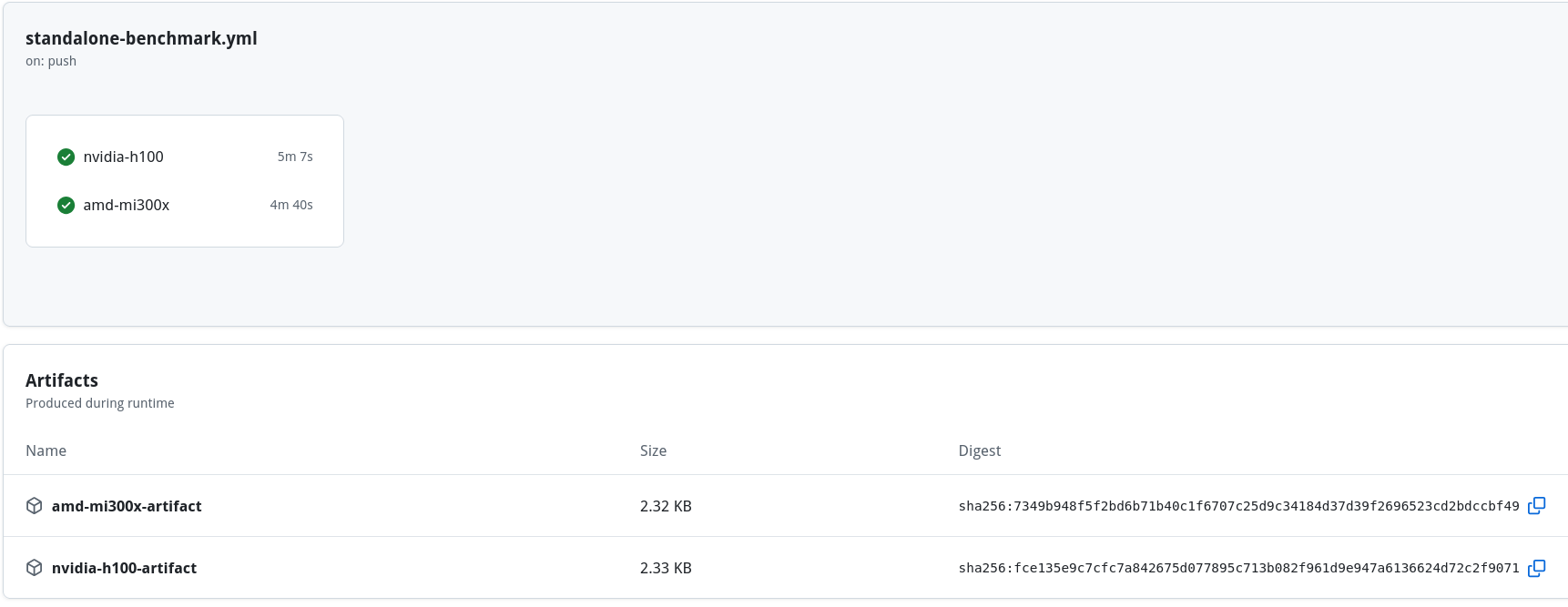

ALICE O2 CI-Pipelines on NGT Cluster

- A fork of the AliceO2 repo is not in the NextGenTrigggers (NGT) GitHub organization

- It has a GitHub action running the standalone benchmark on NGT GPUs (H100)

- Uses the builds in /cvmfs/alice.cern.ch/ of O2 and dependencies (pipeline takes about 7 minutes)

- Different GPUs are tested in parallel on different VMs

- O2 standalone benchmarks now works on the following ngt-resources:

- Nvidia H100 188GB NVL

- AMD Instinct MI300X

- AMD Radeon Pro W7900: Works, but is a bit hacky, need to improve

- Nvidia L40S: Works fine, but needs to be added to the CI

- Next steps:

- Find a better solution for W7900

- Add L40S to CI (trivial)

- Avoid some code repetition in the github workflow file

Implement NGT SoA Code in O2 standalone benchmark

- Working on this fork of the AliceO2 repo

- Simplified and optimized the SoA code in the last few weeks

- Everything is running and we have identified the classes apply our SoA code to

- Next steps:

- Discuss these classes with David

- Implement the SoA code on those classes

-

10:35

→

10:40

Following up GPU to-dos 5mSpeaker: Dr Vikas Singhal (Department of Atomic Energy (IN))

-

10:40

→

10:45

TPC Clusterization / OpenCL / Highly Ionizing Particles 5mSpeaker: Felix Weiglhofer (Goethe University Frankfurt (DE))

OpenCL

Clang printf

Upstreamed fix to LLVM: https://github.com/llvm/llvm-project/pull/150210

-> Merged into main branch (LLVM 22) and backported to LLVM 21

-> Branch for LLVM 20 closed, no more releases planned

PoCL

> Sometimes statements like

if (iThread == 0) printf("...");are printed by all threads. Can be fixed with a barrier after the print.Bug report: https://github.com/pocl/pocl/issues/1977

Bug confirmend, but PoCL devs are rewriting their loop vectorizer.

New loop vectorizer: Fixes this particular issue.

However, full decoder kernel runs into endless loop / deadlock with it, so can't test original issues yet.

Working on reproducer for deadlock now.

-

10:45

→

10:50

ITS Tracking 5mSpeakers: Felix Schlepper (CERN, Heidelberg University (DE)), Gabriele Cimador (CERN, Università and INFN Torino), Matteo Concas (CERN)

TODOs (help welcome)

- validate new features if they also work on GPU

- deltaROF tracking currently not working have to check why and fix

- otherwise everything used in production should be there

- porting Vertexing code to GPU (in progress)

- optimize GPU part:

- `started` using multiple streams very much in its infancy

- optimise load times/processing etc. (probably [not measured] parts could be improved)

- optimise number of blocks/threads per kernel (for Pb-Pb + pp sep., fairly small number of kernels to be optimised)

- Aranged meeting with Gabriele (after his vacation)

- How difficult is it to also set up RTC for ITS kernels?

- Have a CI procedure/periodic check that runs ITS GPU reconstruction and then gets some automatic checks and numbers (deterministic mode is more important)

- Ensure gpu-reco-workflow with ITS enabled are the same that one gets from its-reco-workflow --gpu

- Erroneous assumption in reproducer, for ITS cluster squashing (merging of clusters across readout-frames) has been disabled online, effectively disabling perfect compression and increasing storage requirements, in async prod. squashing is enabled though (of course)

- added env var to reclusterize in dpl-workflow.sh

- could test for pp processed 1000 TFs with TPC, ITS successfully

- PbPb might be problematic, need to understand when GPU memory is freed using the framework allocator

- saw an increase in dropped TFs (dropped meaning ITS code exceeded avail. memory, caught the std::bad_alloc and outputted 0 tracks, not crashing processing) using TPC, ITS GPU in high int. rate (40kHz) Pb-Pb(request by David&Vasco for some ALICE3 hardware estimates with current algorithm, we have some ideas but need to refine a bit)

-

10:50

→

10:55

System Run Coordination Topics 5mSpeaker: Ernst Hellbar (CERN)

-

10:00

→

10:20